It’s easy to focus on the exorbitant price and questionable market performance of Apple Vision Pro, but what is undeniable is the stunning technological achievement driven by visionOS 2.

Apple unveiled the update to its mixed reality platform during WWDC 2024, promising new gestures, an updated guest mode, more visual experiences like a Bora Bora environment, and immersive videos via Safari.

But perhaps no announced update is more eagerly awaited than the creation of spatial images from conventional 2D images.

The platform is still in beta and is scheduled to ship as a full update sometime in September, but I’ve tried it out and am happy to report on another notable Vision Pro achievement.

The new image conversion feature in the visionOS 2 developer beta goes far beyond simply duplicating an image and showing one eye a slightly distorted version of the same photo.

Traditional spatial, or stereo, photography typically involves taking two photos from positions about as far apart as our eyes to replicate our 3D vision. But turning a single flat digital photo into a spatial one is something else entirely. Apple’s machine learning imaging system can analyze a photo (almost any image) down to the pixel level.

Apple’s understanding of subjects and objects in a photo is well known. On iOS, the lock screen can separate a photo’s subject from the background well enough to show, for example, your head overlapping the lock screen clock. The same capability lets you pull your pup out of a photo and put his furry self in an iMessage.

Spatial photos in Vision Pro combine image analysis with the system’s excellent stereo imaging capabilities to create a stunning effect.

To test it out, I chose a series of photos that I thought would best illustrate Insta-Stereo’s capabilities: photos of me around town, me with my wife on a trip, some of my favorite pictures of Charley, my mother-in-law’s Yorkie, and one image that’s over 50 years old, a rare photo of me and my siblings with my grandparents (on my father’s side).

It’s not a complicated process. I put these pictures in an album on my phone and then AirDropped them to the Vision Pro headset.

I started by selecting one of my photos, a picture of the dog in my mother-in-law’s yard. In the top left corner of the photo viewer window, there is now a small 3D cube that you can select to convert the photo into a spatially compatible image. Once you do that, the system appears to scan the image and select the subject(s) and background. A second later, the original image is replaced with a 3D image.

Charley now stood out in front of the bushes behind him. When I moved my head, the perspective changed ever so slightly. His fur was well defined; it didn’t look clipped or cut out and pasted. It looked natural, but with real dimension. I switched to immersive mode, where the frame fades out and the photo moves toward you. Charley looked real enough to pet.

This was the case with all the pictures I chose, but the one that really surprised me was the 50-year-old picture of my family. I didn’t have great expectations for this picture. It was, after all, a photo of a small 5″ x 6″ photo.

I repeated the conversion process and was left a little speechless. Yes, the resolution was lower than in the other images, but that was due more to the source than to the spatial 3D process. I had never seen a family photo like that before.

My long-dead grandparents seemed almost tangible again, and there, at the foot of the couch, sat a young me. I looked real, but like someone I barely recognized. I had seen this picture countless times, but suddenly I noticed details I had missed, like the fact that my little sister, sitting on my grandfather’s lap, was holding a crisp dollar bill. My grandparents always brought money; I had somehow forgotten that.

I’ve written about the power of these spatial images before, but applying them to any old photo takes things to a new level. The technology can actually be applied to a variety of otherwise flat images, like screenshots, photos of movie posters, and album covers. The system can analyze and convert pretty much anything.

I noticed with all image conversions that some clipping occurs during the process. It’s not a lot, but for example when I converted a photo of the Starship Enterprise from Star Trek (from a trading card), the border around the image was lost, so the edges of the saucer or main hull filled my field of view.

visionOS 2 automatically selects 10 photos per day to convert, but you can also select the ones you want.

Better control

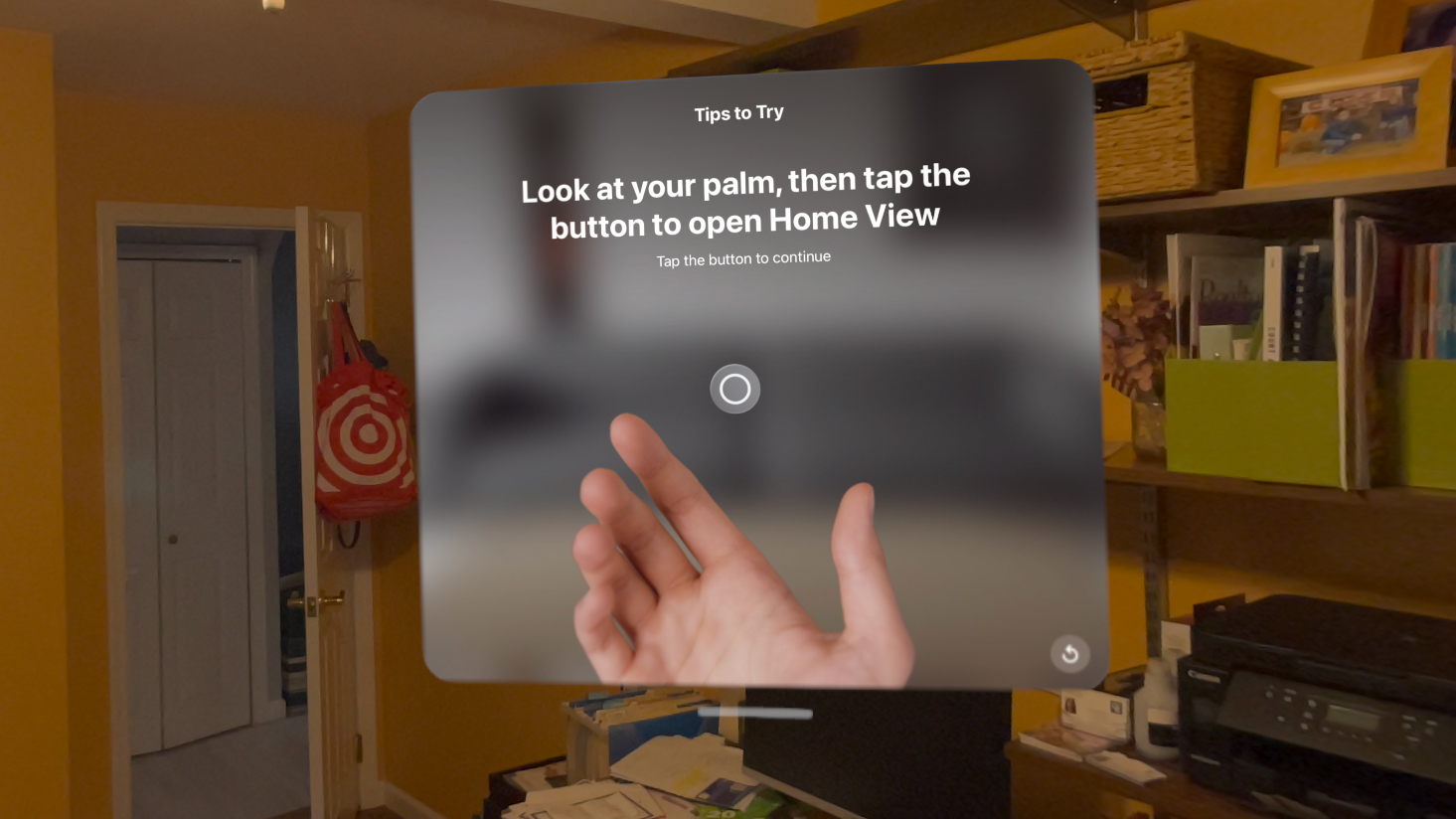

There are plenty of other, less emotive updates in visionOS 2, such as a new and better way to access the home screen, status bar, and control panel. I can now hold out my hand, palm up, in an almost pleading gesture, look at my palm, and a little circle icon appears. Then I tap my fingers once to bring up the home screen; no more pressing the Digital Crown (unless you want to).

When the circle appears, I can also turn my hand over and tap my index finger and thumb together to bring up the control panel. This last action is much easier than the current visionOS method, which requires looking way up to see a small green icon and then tapping with your fingers. If I make the same gesture and keep my fingers pressed together, I can access a new volume control, which is again easier than using the Digital Crown.

The home screen is now customizable. I was able to press and hold an app icon to reorganize the apps however I wanted. For example, I was able to move the Home app from the Compatible Apps folder to the main screen.

visionOS 2 also adds a new keyboard detection feature that lets a real Magic Keyboard or connected Mac keyboard show through even in full immersion mode. I immersed myself in the new Bora Bora environment (and immediately wanted to be on a real beach), but when I moved my hands toward my MacBook Air, a window opened around my MacBook’s keyboard. visionOS knows where the keyboard is and cuts a small opening in the immersive scene. This is pretty clever and could make the system more useful for multitaskers.

Safari also received a small upgrade with the ability to take embedded panoramic photos (assuming websites add them) and turn them into 360-degree panoramas in the headset. I was also able to watch YouTube videos in the same way I could watch videos in the Disney+ Vision Pro app, for example: as floating screens in an immersive environment.

Apple remains keen to give development partners, and especially enterprises, more reasons to develop for Vision Pro and integrate it into their business processes.

There’s a new volumetric API that makes it easier for developers to create 3D objects and interactions that don’t have to take up the entire Vision Pro environment. I tried out an app that allowed me to manipulate a realistic-looking ground speeder without losing access to Safari.

A new tabletop API allows developers to create more detailed tabletop experiences and games. I saw a 3D chess board that also included elements of the game Clue, complete with tiny, very realistic-looking rooms built into the virtual game board. The chess pieces looked especially real.

Apple hopes that a mixed-reality approach using the Vision Pro’s main camera will entice companies to develop training systems, for example. I used one such system to check that a garbage disposal mounted under the sink was in good working order. Big orange virtual arrows floated around the device, showing me where to look, while guidance messages gave me instructions on what to do. When I pushed the garbage disposal aside, the arrows followed.

There are also numerous other updates that make Vision Pro even more useful, easier to use, and more inspiring. None of this can change the $3,499 price tag or the fact that you still have to wear a 600-gram computer on your face to take advantage of the updates. But if you use Vision Pro, this update might just put a smile on your headset-clad face.

You can now install visionOS 2 on your own Vision Pro headset, but keep in mind that this is still a developer beta, so expect bugs and changes before the final version.